The Evidence Ledger

“Probable” Is Not a Legal Standard: Why Forensic Investigations Require a Different Kind of AI

There is a moment in every financial investigation when everything that came before it either holds or collapses.

It might be a deposition. A regulatory review. A courtroom exhibit handed to opposing counsel. At that moment, the question is not whether your analysis is sophisticated or your tools are modern. The question is whether every number, every transaction, every conclusion can be traced directly back to original evidence and defended line by line.

Courts and regulators do not operate on confidence intervals. They require traceable, source-backed conclusions.

The Tools Were Designed for a Different Problem

Walk into any accounting firm today and you'll find AI accelerating daily work. Generative tools draft memos and correspondence. OCR platforms extract data from bank statements and receipts in minutes. Reconciliation software matches transactions with impressive speed. These are genuine productivity gains, and they're appropriate for the work they were designed to do.

But forensic accounting operates under fundamentally different conditions.

Records may be incomplete, altered, or deliberately obscured. Transactions may be structured specifically to hide relationships. Undisclosed accounts are common. And when the investigation concludes, every finding must withstand scrutiny from judges, regulators, and opposing counsel trained to find exactly the kind of ambiguity that general-purpose AI leaves behind.

The popular AI tools in most accounting stacks are probabilistic systems. They are optimized to identify patterns, predict likely outcomes, and generate responses that are statistically plausible. That architecture makes them genuinely useful for routine, predictable work. It also means they estimate rather than verify, and infer rather than trace.

In a forensic context, that distinction is not a minor technical detail. It is a professional liability.

What Rules-Based Verification Actually Looks Like in Practice

The alternative to probabilistic AI in forensic work is not slower or more manual. It is architecturally different.

Rules-based verification means every match between a transaction and a source document is made by a deterministic algorithm, not a statistical inference. The system either finds the corresponding bank record or it does not. Every result is traceable. Every discrepancy is flagged. Every conclusion can be explained to a judge, because it follows from a rule that can be stated explicitly.
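To make the idea concrete, here is a minimal sketch of a deterministic matcher in Python. It is an illustration only, not any vendor's implementation: the `LedgerEntry` and `BankRecord` types, the three matching rules (identical amount, date, and check number), and the sample data are all assumptions chosen for the example. The point it shows is that a match either satisfies every stated rule or is flagged, with no scoring and no guessing.

```python
from dataclasses import dataclass
from datetime import date
from decimal import Decimal
from typing import Optional


@dataclass(frozen=True)
class LedgerEntry:  # hypothetical record from the books under review
    entry_id: str
    posted: date
    amount: Decimal
    check_number: Optional[str]


@dataclass(frozen=True)
class BankRecord:  # hypothetical line from a bank statement
    record_id: str
    posted: date
    amount: Decimal
    check_number: Optional[str]
    source_document: str  # where the record came from, e.g. file and page


def match_entry(entry, records):
    """Return the one bank record that satisfies every rule, else None."""
    candidates = [
        r for r in records
        if r.amount == entry.amount
        and r.posted == entry.posted
        and r.check_number == entry.check_number
    ]
    # Zero candidates or several candidates both count as "no match":
    # the entry is flagged for review rather than resolved by a guess.
    return candidates[0] if len(candidates) == 1 else None


def reconcile(entries, records):
    """Split entries into matched (entry id -> record id) and flagged ids."""
    matched, flagged = {}, []
    for entry in entries:
        record = match_entry(entry, records)
        if record is None:
            flagged.append(entry.entry_id)
        else:
            matched[entry.entry_id] = record.record_id
    return matched, flagged


# Illustrative data only.
records = [
    BankRecord("B1", date(2023, 3, 1), Decimal("1250.00"), "1041", "stmt_2023-03.pdf p.2"),
    BankRecord("B2", date(2023, 3, 4), Decimal("300.00"), None, "stmt_2023-03.pdf p.3"),
]
entries = [
    LedgerEntry("E1", date(2023, 3, 1), Decimal("1250.00"), "1041"),
    LedgerEntry("E2", date(2023, 3, 9), Decimal("980.00"), None),
]
matched, flagged = reconcile(entries, records)
print(matched)  # {'E1': 'B1'}
print(flagged)  # ['E2']
```

Amounts are held as `Decimal` rather than floating-point values so that equality comparisons on currency are exact. Running the same inputs through this code always produces the same matched and flagged sets, which is the property the paragraph above describes.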

The practical impact of this distinction is significant.

A recent investigation conducted by global consulting firm J.S. Held involved approximately 20,000 transactions across 10 accounts over a five-year period, including nearly 1,000 check images, many of them handwritten. The volume and complexity would have required weeks of manual preparation under traditional methods.

Using a purpose-built verification platform, all financial data was processed within 24 hours. Checks were automatically matched to corresponding bank transactions. Transfer patterns across accounts were identified algorithmically, surfacing fund flows that manual sampling would likely have missed. The total investigation ran approximately one week from start to finish. The findings identified over $7 million in stolen funds, with every transaction linked back to its original source document.

Ken Feinstein, Managing Director at J.S. Held, described the impact directly: the platform made the work not just faster, but completely accurate, a distinction that matters enormously when findings must survive courtroom scrutiny.

The Right Question to Ask About Any AI Tool in Your Stack

AI adoption in forensic accounting is accelerating. An estimated 60% of forensic accounting firms already use AI-powered tools. That is not inherently a problem. The problem is adopting tools without asking the question that matters most for this work: Can this system explain exactly how it reached its conclusion?

If the answer is "it identified a pattern" or "the model determined it was likely," that is not sufficient for evidentiary work. If the answer is "this transaction matched this bank record based on these specific criteria, and here is the source document," that is a defensible finding.
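One way to picture what a defensible answer looks like in data is sketched below in Python. Everything here is hypothetical: the `RULES` table, the field names, and the sample records are assumptions made for illustration, not the output format of any particular product. The sketch shows a finding that carries its own explanation, listing each named criterion, whether it held, and the source document behind the bank record.

```python
from datetime import date
from decimal import Decimal

# Hypothetical rule set: each rule has a plain-language name and a test
# applied to a (transaction, bank record) pair.
RULES = {
    "amount is identical": lambda t, b: t["amount"] == b["amount"],
    "posting date is identical": lambda t, b: t["date"] == b["date"],
    "check number is identical": lambda t, b: t["check_no"] == b["check_no"],
}


def explain_match(txn, bank):
    """Evaluate every rule and return a finding that records each result."""
    criteria = {name: rule(txn, bank) for name, rule in RULES.items()}
    return {
        "transaction": txn["id"],
        "bank_record": bank["id"],
        "source_document": bank["source"],
        "matched": all(criteria.values()),
        "criteria": criteria,
    }


# Illustrative data only.
txn = {"id": "E1", "amount": Decimal("1250.00"), "date": date(2023, 3, 1), "check_no": "1041"}
bank = {
    "id": "B1",
    "amount": Decimal("1250.00"),
    "date": date(2023, 3, 1),
    "check_no": "1041",
    "source": "stmt_2023-03.pdf p.2",
}

finding = explain_match(txn, bank)
for name, passed in finding["criteria"].items():
    print(f"{name}: {'yes' if passed else 'no'}")
print("source:", finding["source_document"])
```

Because each criterion is named and evaluated separately, a failed match is just as explainable as a successful one: the finding shows exactly which rule did not hold.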

The distinction is not about which vendor you use or how much processing power sits behind the tool. It is about whether the architecture of the system is compatible with the standard your work already demands.

Most general-purpose AI tools are not compatible with that standard. The next model update will not close that gap, because it is a structural difference in how these systems are built and what they are built to do.

A Framework Worth Keeping

As you evaluate AI tools for investigative work, one question cuts through most of the noise:

Could I explain this conclusion to a judge?

Not summarize it. Not characterize it. Explain precisely how the system arrived at it, what evidence it matched, and what rule governed the match.

If the tool cannot support that explanation, it belongs in your productivity stack, not your evidentiary workflow.

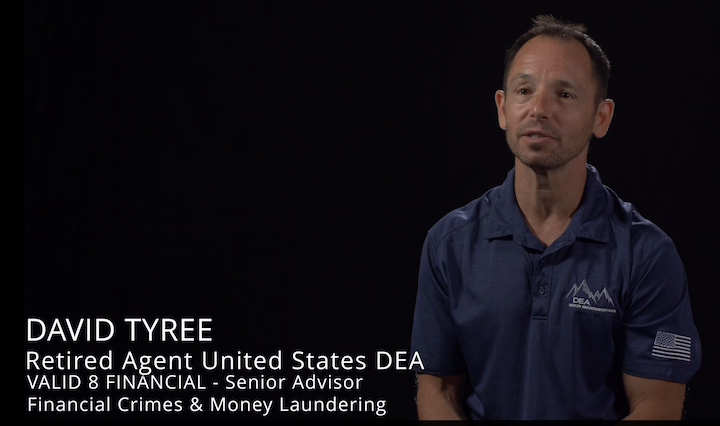

Valid8 Financial builds Verified Financial Intelligence (VFI) software purpose-built for forensic accountants, government investigators, and legal professionals who need financial evidence that holds up under scrutiny. Learn more at valid8financial.com.